Iron Man meets Star Trek: Space diving suit in development

May 29, 2013

Science fiction may well become reality with the development of a real-life Iron Man suit that would allow astronauts or extreme thrill seekers to space dive from up to 62 miles (100 km) above the Earth's surface at the very edge of space, and safely land using thruster boots instead of a parachute. Hi-tech inventors over at Solar System Express (Sol-X) and biotech designers Juxtopia LLC (JLLC) are collaborating on this project with a goal of releasing a production model of such a suit by 2016. The project will use a commercial space suit to which will be added augmented reality (AR) goggles, jet packs, power gloves and movement gyros.

Déjà vu anyone?

So where have we seen this before? If you are a Trekker, you will remember the scenes from 2009's Star Trek (The Future Begins) where James T. Kirk, Hikaru Sulu and Chief Engineer Olson performed a space dive to the Narada's drill platform. They jumped from a shuttle craft above planet Vulcan wearing high tech suits and used parachutes to land on the rig. “Super” Trekkers will also know about the space dive scene cut from the 1994 Star Trek Generations movie and the holodeck simulated "orbital skydiving" in Star Trek Voyager (Episode 5x03) in 1998.

More recently the Iron Man movies have highlighted Tony Stark, a fictional comic book hero, who invents and uses a powered exoskeleton-like armor that defines him as the super hero “Iron Man.” The key elements of Stark’s suit are the jets situated in the boots and the repulsors located in the gauntlets. The repulsors in the 2008 movie are used as a form of propulsion and as steering jets, though they can also be used offensively. The helmet, with projected holographic heads-up display (HUD) and HAL-like artificial intelligence butler JARVIS (Just a Rather Very Intelligent System), tops off the outfit.

In real life we have Felix Baumgartner, an Austrian skydiver, daredevil and BASE jumper who set a world record on October 14, 2012 by skydiving from an estimated 24.24 miles (39 km) and reaching a speed of 843.6 mph (1,357.64 km/h), or Mach 1.25. His jump from a helium balloon in the stratosphere set records for the highest manned balloon flight, the highest-altitude parachute jump and the greatest free-fall velocity. His suit was designed to provide protection from temperatures of -90° to +100° F (-68° to 38° C) and was pressurized to 3.5 pounds per square inch, roughly equivalent to the atmospheric pressure at 35,000 feet (10,668 meters).

The challenges of space diving

The scientists and developers at Sol-X and JLLC are working on a suit that will enable space divers to jump from the Kármán line, which lies at an altitude of 62 miles (100 km) above sea level. This will involve descending through the vacuum of space, which is quite a different challenge than a dive that begins in the relative thickness of our planet’s lower atmosphere.

In order to achieve their goals, the team must overcome many technical difficulties. The suit must protect the diver against hostile temperatures, low pressure and the lack of oxygen. At the heights involved, low pressure may cause decompression sickness or ebullism. There is also the possibility of a suit breach, which would cause the space diver to lose both oxygen and protection. Even though the diver will reach supersonic speeds, the descent from this height takes longer, so extra oxygen must be carried as a reserve even if it is never used.

The suit must be capable of withstanding the heat of re-entry and supersonic and hypersonic shock waves. G-forces are also in play. As the space diver slices through the thin atmosphere to the denser air below, it is possible they would experience positive or negative G-forces of 2 to 8 g, which may cause pressure-related complications or even black-outs. Spinning out of control, which actually occurred for roughly 10 seconds during Felix Baumgartner’s descent, can cause blood to pool in the extremities, possibly causing hemorrhages or unconsciousness.

RL MARK VI Space Diving Suit

According to Sol-X, its RL MARK VI Space Diving Suit would allow high-altitude jumps from near-space, suborbital space, and eventually low-Earth orbit itself. The initials RL honor Major Robert Lawrence of the United States Air Force. He was America’s first African-American astronaut and was killed on December 8th, 1967 in a test flight at Edwards Air Force Base in California.

The yellow real-life prototype Iron Man suit alongside Felix Baumgartner's Red Bull Stratos suit (Photo: Blaze Sanders/Solar System Express/Red Bull Stratos)

Sol-X intends to start in similar fashion to the Red Bull Stratos jump, first testing the suit with lower-altitude jumps and parachute descents, but the final goal is far more ambitious. Through the use of wingsuit technology and specially-designed boots with miniature aerospike engines attached, the space diver will end his spectacular jump with a glide to Earth and a power-assisted vertical landing. At least, that's the plan.

New York-based Final Frontier Design is working with Sol-X on a customized version of its low-cost Intra-Vehicular Activity IVA 3G spacesuit, successfully crowdfunded last year through an online Kickstarter campaign. Lightweight layers of aerogel and Space Shuttle-like flexible insulation blankets will serve as the spacesuit’s outermost protective thermal layer, and Sol-X is currently in talks with several wingsuit manufacturers to assist in merging their technology with the RL MARK VI Space Diving Suit.

Juxtopia’s AR Goggles

Juxtopia’s AR Goggles work on the principle of “Optical See-Through,” similar to the HUD on a fighter jet, with numerical information and other visual data overlaid on the pilot’s outside views. Similar also in function to Google Glass, the AR Goggles are first and foremost intended to provide the space diver with a constant stream of vital information to assist in course direction and maintaining the dive within the specified safety parameters.

Real-time dynamic analytics keep the diver advised of heart rate, respiration and internal/external space suit temperatures. The display will provide data on rates of acceleration and deceleration, GPS location, and elevation, plus an FAA radar display of the local airspace. The design of the goggles includes voice control to turn the RL MARK VI’s systems on and off, eject spent hardware components from the diver’s body at different altitudes, manipulate suit cams and lighting, and to control verbal communications to ground control.

Mock up HUD display of the hi-tech augmented reality goggles (Photo: Blaze Sanders/Juxtopia)

Gyroscopic boots and power gloves

The gyroscopic boots will perform two vital functions. At 62 miles (100 km) high there are no aerodynamic forces acting upon the diver’s body that will assist them in stabilizing the dive. The gyroscopes built into the boots will provide a stabilizing mechanism to maintain a balanced and optimum attitude during descent from the thermosphere down to the stratopause. A further safety feature known as a “flat spin compensator” will kick in if the diver loses control of his attitude for more than five seconds.
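The five-second rule for the “flat spin compensator” can be pictured as a simple timer over the diver's attitude-control status. The sketch below is purely illustrative: the article gives only the five-second threshold, so the `SpinWatchdog` class, the `in_control` flag and the sampling scheme are all assumptions rather than anything Sol-X has described.

```python
from dataclasses import dataclass
from typing import Optional

LOSS_OF_CONTROL_LIMIT_S = 5.0  # threshold quoted in the article


@dataclass
class SpinWatchdog:
    """Hypothetical watchdog that trips once control has been lost for longer than the limit."""

    limit_s: float = LOSS_OF_CONTROL_LIMIT_S
    lost_since: Optional[float] = None  # time at which control was first lost

    def update(self, t: float, in_control: bool) -> bool:
        """Feed one timestamped sample; return True when the compensator should engage."""
        if in_control:
            self.lost_since = None  # control regained, so reset the timer
            return False
        if self.lost_since is None:
            self.lost_since = t  # first sample of this loss-of-control episode
        return (t - self.lost_since) > self.limit_s


# Made-up samples: (time in seconds, diver in control?)
watchdog = SpinWatchdog()
samples = [(0.0, True), (1.0, False), (4.0, False), (6.0, False), (6.5, False), (7.0, True)]
engaged = [watchdog.update(t, ok) for t, ok in samples]
print(engaged)
```

With these made-up samples, control is lost at t = 1.0 s, so the watchdog first reports True at t = 6.5 s, once more than five seconds have elapsed, and resets when control returns.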

The other main function of the diver’s gyroscopic boots will kick in as he nears the surface of the Earth and he fires off his miniature in-built aerospike thrusters to gently descend to the ground for a feet-first perfect landing. The controllers for the gyroscopic boots will be built into “power gloves” for ease of access.

Preliminary CAD design of the RL MARK VI "rocket boots" (Photo: Blaze Sanders/Solar System Express)

Gravity Development Board

A Gravity Development Board (GDB), a proprietary piece of hardware designed by Sol-X, will serve as the main interface between the MARK VI’s three major components and will control all critical systems.

According to Blaze Sanders, Chief Technology Officer of Sol-X, “The GDB will be the first space-rated open hardware electronic prototyping board, enabling any type of person to create space qualified hardware. The GDB will replace the Arduino Uno as the preferred high-level prototyping environment.”

The final frontier

Testing the suit at altitude should begin around July of 2016 with 1.25 mile-high (2 km) parachute jumps from a helium balloon and tethered tower. No firm dates have been set for suborbital and orbital testing, but initial plans call for the use of a robot (under development) supplied by Juxtopia to be used as the test subject for the first few jumps. Thrill-seeking adventurers will just have to enjoy their "orbital skydiving" via the big screen for a while longer.

The following video highlights space diving's potential.

Source: Solar System Express

3D Printed Food??

Just when we started getting used to the idea of printers spitting out objects, it turns out we have to wrap our heads around the idea of printing edible food. It's no gimmick -- NASA is even making room in its tight budget to fund a project that could lead to a whole new way of feeding astronauts. For their proof of concept, the researchers printed out a nice layer of chocolate.

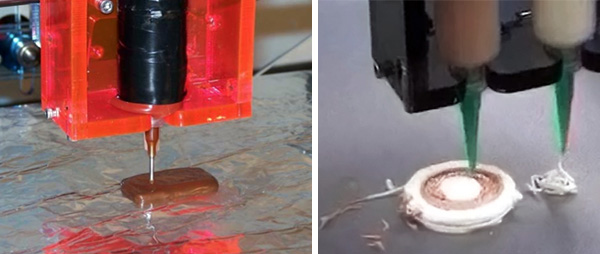

Examples of the device in use, printing chocolate and frosting shapes

In space no one can hear you call out for pizza, but technology being developed in a NASA-funded project might let astronauts print one instead -- or any number of potentially delectable meals.

Systems and Materials Research Corporation received a US$125,000 grant from NASA to build a prototype device that prints food.

The project, led by mechanical engineer Anjan Contractor, is still quite a ways from the replicator technology of Star Trek, but it could be the next step in providing sustenance for those planning to leave the Earth's orbit.

"There is serious work being done on this end," said Chris Carberry, executive director of Explore Mars. "This is being developed very much to help get us to Mars. It certainly is one step that is necessary in getting there."

3D Printing of Food

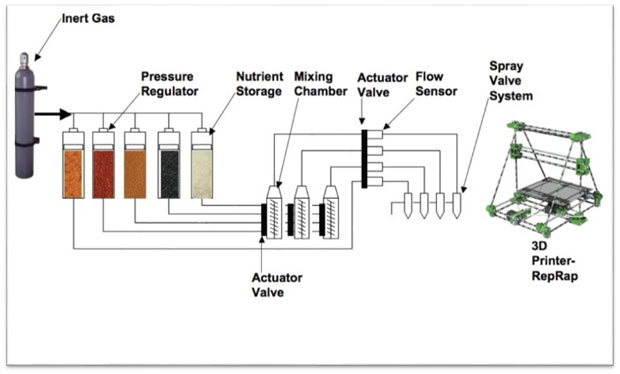

The technology will utilize progressive 3D printing and inkjet technologies; SMRC will design, build and test a complete nutritional system for long-duration missions beyond low Earth orbit.

To illustrate the concept, Contractor has envisioned a test system that could print a pizza. For starters, the printer would create a layer of dough, which would be cooked while printed. Then tomato powder would be mixed with an oil and water solution to create the sauce. A topping could include a nondescript protein layer.

"Printing food this way could be a pretty big deal for long-term stays in space and Mars," said futurist Glen Hiemstra, "and also for us here on Earth."

3-Step Process

The process of getting NASA funding for the project is almost as complex as printing 3D food.

"We are in negotiations to sign a contract," said NASA spokesperson David Steitz. "The first step is to apply for the Small Business Innovation Program, which program has three stages. The first is the feasibility study, where we look at the proposal. ... We then award a contract to build a prototype -- and finally, the third part is to partner with a third party to build a business that can become a NASA contractor."

SMRC has passed the first milestone and has received a $125,000 grant for six months to deliver that feasibility study based on the concept. The company could then apply for a $750,000 grant, which would give it two years to develop a prototype and would lead to actually testing the food with astronauts.

Schematic of SMRC's 3D Food Printing Process (SMRC)

Weight Savings

One of the biggest reasons for developing a printed food solution is weight -- not the type that's carried around waistlines, but the type filling spacecraft cargo holds.

"One of the challenges we face with deep space exploration is weight and mass in terms of the size of the craft and the fuel used," Steitz told TechNewsWorld. "It is one thing to go up to low Earth orbit, such as the International Space Station, where basic materials such as food can be resupplied. But this isn't the case with missions to Mars or the asteroids."

The important areas of research thus continue to be in human life support systems, including everything from air to water to food. Contractor is just one of many innovators addressing a big problem.

"We have some of the smartest minds in house working on food storage, but with all innovation required to get us out there, you can't just rely on your brightest minds alone," said Steitz.

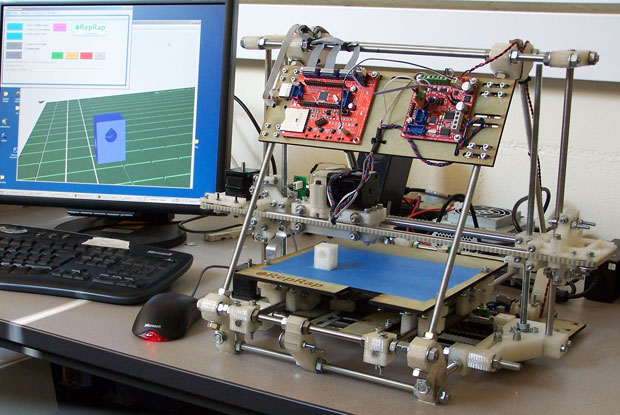

Open source RepRap 3D printer hardware will be the basis for Anjan Contractor's NASA-funded 3D food-printer project. The printer pictured is RepRap's Mendel.

3D Parts Printing

While this latest research could change the way people eat in space, 3D printing could have applications well beyond the table.

"In 2015, we'll bring a 3D printer to the space station, which will be the first time we'll even test added manufacturing in space," Steitz noted. "We've looked at this for spacecraft parts that in the past had been created by hand. So we have a lot of experience with added manufacturing and 3D printing technology."

NASA's goal is to eventually print a whole spacecraft -- and to do it in space rather than on Earth. This could allow for significant design changes.

Printing Money

Whether it's the spacecraft itself, its parts, or the food that astronauts eat, additive manufacturing means money saved.
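For a rough sense of scale, the figure NASA's David Steitz cites, about US$10,000 per pound to low orbit, can be turned into a back-of-the-envelope calculation. This is only a sketch: the flat per-pound rate is a simplification, and the two payload masses are hypothetical numbers chosen for illustration, not figures from NASA or SMRC.

```python
COST_PER_POUND_USD = 10_000  # Steitz's approximate figure for low orbit
LB_PER_KG = 2.20462          # pounds in one kilogram


def launch_cost_usd(mass_kg: float, cost_per_lb: float = COST_PER_POUND_USD) -> float:
    """Approximate cost of lifting mass_kg to low orbit at a flat per-pound rate."""
    return mass_kg * LB_PER_KG * cost_per_lb


# Hypothetical comparison: 100 kg of packaged food versus 80 kg of dry feedstock.
packaged = launch_cost_usd(100)
feedstock = launch_cost_usd(80)
print(f"packaged:  ${packaged:,.0f}")
print(f"feedstock: ${feedstock:,.0f}")
print(f"saved:     ${packaged - feedstock:,.0f}")
```

At this rate every kilogram left on the ground is worth roughly $22,000, which is why even modest mass savings matter on long missions.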

"Right now, it costs about (US)$10,000 per pound to take something just into low orbit," said Steitz.

"Therefore, on long missions, we have to improve the sustainability with air, water and food -- and 3D printing provides a great potential for that," he added.

"The potential benefits would be that the form and fit in the delivery system could be addressed, as well as the inclusion of critical nutrients such as protein," Steitz added. "There are obviously issues to be worked out, such as what it would taste like and what would it look like, but we'll get there."

You’ve probably pointed out the face on the moon, or noticed that a puffy cloud looks exactly like Jeff Goldblum from a certain angle. If you like finding human faces in inanimate objects, you are in for a real treat: Artists from a Berlin-based generative design studio called Onformative created a project called “Google Faces,” and it unearths unnervingly human mugs on various surfaces around the world using facial recognition software and Google Maps.

Onformative describes the Google Faces project as “an independent searching agent hovering the world to spot all the faces that are hidden on earth.” The Facetracker tool used to scan the globe for things that look like faces is still working, so the results aren’t complete yet, but what they’ve pulled up so far is pretty uncanny and may give you night terrors.

Even though the results are a little eerie, the methodology the Onformative team implemented to find these faces is impressive. They used Google Maps and applied a facial recognition algorithm using a facetracking library created by Jason Saragih. And the results speak for themselves.

Cedric Kiefer, one of Onformative’s founders, explains the inspiration behind Google Faces. “The basic idea came while working on another project using face tracking technology,” he tells us. “At the beginning we had a high rate of false positives, the detection of a face when there is none.”

“We asked ourselves why that happened and what causes the computer to see a face in something else. A phenomenon that is called Pareidolia, the tendency to see faces in clouds or nature. For example the ‘Face on Mars’ taken by Viking 1 on July 25, 1976 is a good example for that phenomenon. We were curious to find out how the psychological phenomenon of Pareidolia could be generated by a machine and started to scan Google Maps to find such faces hidden in landscape.”

The project was not without its challenges, though. “Technically, the one challenge was the communication of our face recognition robot and Google Maps.”

Kiefer gave us a run-down of exactly how Google Faces works. “Our system mainly consists of two parts, an internet page containing nothing else then a full screen of Google Map hosted on one of our servers and a standalone application, the bot, browsing this internet page day in and day out. The bot continuously simulates clicks to move and zoom to the next desired image – making snapshots and running face recognition software.”

“In order to calculate the next position, it needs to keep track of the position it is looking at and the places it already traveled. Therefore the robot needs to calculate correct algorithmical steps and requests to navigate intelligent around the world and stay in tight communication exchange with the Google Maps API.”
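The bookkeeping Kiefer describes, stepping to the next map position while remembering where the bot has already been, can be sketched as a grid traversal with a visited set. This is not Onformative's code: the grid spacing, the latitude and longitude ranges, and the `fake_detector` stand-in are assumptions, and the real bot drives a browser page showing Google Maps and runs face tracking on each snapshot.

```python
from typing import Callable, Iterable, Iterator, List, Set, Tuple

View = Tuple[int, float, float]  # (zoom level, latitude, longitude) of a map view centre


def grid_views(zoom: int, step_deg: float,
               lat_range: Tuple[float, float] = (-60.0, 60.0),
               lon_range: Tuple[float, float] = (-180.0, 180.0)) -> Iterator[View]:
    """Yield view centres on a regular grid for one zoom level (assumed layout)."""
    n_lat = int((lat_range[1] - lat_range[0]) / step_deg)
    n_lon = int((lon_range[1] - lon_range[0]) / step_deg)
    for i in range(n_lat):
        for j in range(n_lon):
            yield (zoom,
                   lat_range[0] + (i + 0.5) * step_deg,
                   lon_range[0] + (j + 0.5) * step_deg)


def scan(views: Iterable[View],
         looks_like_face: Callable[[View], bool],
         visited: Set[View]) -> List[View]:
    """Visit each unseen view once and collect the ones the detector flags."""
    hits: List[View] = []
    for view in views:
        if view in visited:
            continue  # already travelled here, skip it
        visited.add(view)
        if looks_like_face(view):
            hits.append(view)
    return hits


def fake_detector(view: View) -> bool:
    """Stand-in for the snapshot-plus-face-tracking step; flags one arbitrary view."""
    _, lat, lon = view
    return lat == 15.0 and lon == 45.0


visited: Set[View] = set()
hits = scan(grid_views(zoom=3, step_deg=30.0), fake_detector, visited)
print(len(visited), hits)
```

Because `visited` persists between calls, running `scan` again over the same grid does no repeat work, and adding finer zoom levels only means calling `grid_views` with a smaller step.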

But the biggest challenge for Onformative was the sheer number of scannable images. “Although the bot was running non stop for several weeks, we just travelled a small part of the world in different zoom levels so far,” he says. “So we don’t have any future plans what we could possibly do with the bot beside letting him run for some more month. Who knows what else he will find out there.”

We asked Kiefer if Google Faces could possibly search for more narrow facial features – for instance, only faces with mustaches, or images of Jesus (there’s a million dollar idea in the making). So far, the technology can’t winnow things down that far. “Searching for a special face, a face with a mustache or even an image of Jesus would require a different approach that is not possible with the current version of this software,” Kiefer explains.

So you can’t scour Google Maps for Elvis’ face on a chip just yet, but the technology is getting there. In the meantime, check out Onformative’s photo gallery of some of the most interesting faces they’ve found by scanning our planet.

Source: Digital Trends (http://www.digitaltrends.com/social-media/google-faces-the-worlds-creepiest-map-using-facial-recognition-software/)


Though somewhat time consuming and a bit of a brain drain, robot building can also be rewarding and fun. For those just starting out, however, the prospect of simultaneously playing the roles of designer, tinkerer, programmer and troubleshooter in order to breathe life into a pile of wires, motors, plastic and metal might be just a little overwhelming. Linkbots offer a gentler learning curve with a modular platform that starts with a single working unit, and grows into more complex robots by attaching accessories and connecting other units.

The Linkbot platform was developed by UC Davis Department of Mechanical and Aerospace Engineering professor Harry Cheng and a former graduate student named Graham Ryland, while the latter was studying for his master's degree. Within the polycarbonate housing of each Li-ion battery-powered 10-oz (284-g) modular robot sits an ATmega128RFA1 microcontroller from Atmel with an integrated 2.4 GHz radio transceiver, which makes it ZigBee wireless-capable with a 100-m (328-ft) line-of-sight range. It also features multicolor LEDs and a buzzer, physical controls, and a built-in 3-axis accelerometer.

Three of its faces are home to a mounting surface, allowing users to snap connect modules together to build a bigger, badder, meaner Linkbot monster, or attach components like legs, joints, grippers, and even camera mounts. The current accessories are 3D-printed, and the developers have made all of the necessary files available for download and home printing. Users will be able to upload their own designs and share with other Linkbot users. Each mounting surface also sports #6-32 bolt patterns to further extend accessory attachment possibilities.

There's a rotating hub on either side that's reported capable of delivering 100 oz-in (7.2 kg-cm) of torque. The supplied firmware can accurately rotate the motors between zero and 120 degrees per second, with up to 300 deg/sec possible by tweaking the code. This should be enough for walking motion using accessories or connected Linkbots for limbs, as well as driving on wheels. Power supplied to the motors is kept steady courtesy of an integrated voltage regulator circuit.

Something dubbed BumpConnect allows Linkbot modules to wirelessly connect to each other with NFC-like ease. One bot can then be used to remotely control others in TiltDrive mode, which involves tilting the unit back, forward, left or right to issue cable-free speed and direction commands. There's another mode which sees any paired unit following the movements of one master, and another that makes it possible to program movement patterns using your hands instead of a keyboard.
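One way to picture TiltDrive is as a mapping from the controlling unit's accelerometer reading to a pair of wheel speeds. The sketch below is an assumption-laden illustration, not Barobo's firmware: the axis convention, the 45-degree full-speed tilt and the function names are invented, and only the 120 deg/sec ceiling comes from the article.

```python
import math
from typing import Tuple

MAX_SPEED_DEG_S = 120.0  # stock firmware limit quoted in the article
FULL_TILT_DEG = 45.0     # assumption: tilt angle that maps to full speed


def clamp(value: float, limit: float) -> float:
    """Restrict value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def tilt_angles(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Return (pitch, roll) in degrees from an accelerometer reading in g.

    Assumed axes: x forward, y to the right, z up when the unit lies flat.
    """
    pitch = math.degrees(math.atan2(ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, math.hypot(ax, az)))
    return pitch, roll


def tilt_to_wheel_speeds(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Map a tilt reading to (left, right) wheel speeds in degrees per second."""
    pitch, roll = tilt_angles(ax, ay, az)
    forward = clamp(pitch / FULL_TILT_DEG, 1.0) * MAX_SPEED_DEG_S  # forward/back tilt sets speed
    turn = clamp(roll / FULL_TILT_DEG, 1.0) * MAX_SPEED_DEG_S      # sideways tilt sets steering
    return (clamp(forward + turn, MAX_SPEED_DEG_S),
            clamp(forward - turn, MAX_SPEED_DEG_S))


for reading in [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]:
    print(reading, tilt_to_wheel_speeds(*reading))
```

Held flat, the controller commands no motion; tilted fully forward, both wheels run at the limit; tilted fully to one side, the wheels counter-rotate so the driven bot turns on the spot.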

The latter is called PoseTeach and, after physically connecting the bot to a computer running some special BaroboLink Windows/Mac/Linux software, the recorded motions are automatically converted into code. More advanced tools are on offer to enable students of Linkbotics to use different programming languages to control the robots. It's also possible to flash custom firmware onto a Linkbot, so advanced users can extend functionality beyond what's already provided by Barobo (the company formed by Cheng and Ryland to market the technology).

"The C and Python libraries enable you to access every last input and output on the Linkbot, from the battery voltages to the accelerometer readings, LED colors, and even reprogramming the buttons to execute C/Python code," says the company's software engineer. "As an example, one could do some simple room/maze mapping by using the accelerometer readings as a simple 'bump' detector. Or, the robot could be programmed to open blinds if it senses motion triggered by, say, a cat or a sleeping person."

Linkbots are Arduino-compatible for more advanced hacking, and an optional Bluetooth 2.0-capable expansion board for attaching sensors or external devices can be plugged into a module through an I2C bus/phone plug on the top face.
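The "bump detector" idea mentioned by Barobo's software engineer can be sketched without any robot attached. The example below works on plain lists of accelerometer samples and deliberately avoids the Linkbot C/Python libraries, whose exact calls are not given in the article; the 0.5 g threshold and the sample values are assumptions for illustration.

```python
import math
from typing import Iterable, List, Tuple

Reading = Tuple[float, float, float]  # (ax, ay, az) in g


def bump_indices(readings: Iterable[Reading], threshold_g: float = 0.5) -> List[int]:
    """Return indices of samples whose magnitude departs from 1 g by more than threshold_g."""
    hits: List[int] = []
    for i, (ax, ay, az) in enumerate(readings):
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if abs(magnitude - 1.0) > threshold_g:  # at rest the sensor reads about 1 g
            hits.append(i)
    return hits


# Made-up samples: the third one simulates a jolt from hitting a wall.
samples = [(0.0, 0.0, 1.0), (0.1, 0.0, 1.0), (1.8, 0.2, 1.0), (0.0, 0.0, 0.98)]
print(bump_indices(samples))
```

A mapping routine could record the robot's estimated position each time an index is reported, then back up and turn before continuing.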

The tech has already found its way into the hands of local schoolchildren, but to get the system into the homes of consumers, the Barobo team has headed to Kickstarter, where a modest funding target of US$40,000 has been set. The first 50 single Linkbot units (with two wheels and eight mounting screws) have all gone, but another 50 have been made available for pledges of $139 or more. After that, the cost rises to $169. If you want that Bluetooth expansion board, too, you'll need to stump up at least $199.

A single Linkbot is ready to roll, turn, crawl, stand, and tumble out of the box, but if you think that one module won't be enough to satisfy your creative needs, the first double Linkbot kit has a $349 funding level. This comes with four wheels, a bridge bone accessory, 16 mounting screws and a snap connector. Other pledge levels are available for multiple bots and accessory packs. The funding campaign runs until June 18.

The Kickstarter pitch video outlines the main features and educational goals of the Linkbot project.

Sources: Barobo, Kickstarter

Aeryon Labs recently unveiled its latest compact UAV, the SkyRanger, which deploys within seconds from a backpack and boasts a new airframe that can remain aloft in high winds and extreme temperatures.

Many UAVs have issues when it comes to strong winds and adverse weather, but if you're a soldier needing a bird's-eye view, you don't always have time to wait for the sky to clear up. Aeryon Labs' SkyRanger UAV deploys from a backpack within seconds and boasts a new airframe that can remain aloft in high winds and extreme temperatures.

Aeryon designed its new small Unmanned Aerial System (sUAS) as a portable surveillance tool built especially to handle rough weather conditions. In the air, it remains stable in sustained winds of 40 mph (65 kph) and is able to endure 55 mph (90 kph) gusts without any issues. The entire device is also ruggedized and weather-sealed to protect it from minor bumps and moisture, and it remains operational in temperatures ranging from -22 to 122º F (-30 to 50º C).

The UAV was made for field work, so most of its external pieces (battery, arms, legs, etc.) can be replaced without any tools, and the fully-assembled quadcopter weighs only 5.3 lbs (2.4 kg). In flight mode, it measures 40 x 9.3 in (102 x 24 cm), but the arms and legs fold down to a much more compact 10 x 20 in (25 x 50 cm). When folded, the quadcopter's appendages protect its camera or payload and allow it to slip into an accompanying backpack for quick access later.

Once deployed, it can take off and land vertically and is able to remain in flight for 50 minutes on a single charge. A lone operator pilots the SkyRanger with a tablet-like Ground Control Station using simple touch controls to program a flight path or aim an on-board camera.

Aeryon Labs has also included an optics system that houses both an EO camera that captures 1080p30 HD video and 15 MP photos, and an IR camera that records 640 x 480 video and still images. The controls and images are broadcast through a 256-bit AES-encrypted network with a range of 1.9 mi (3 km) beyond line-of-sight.

The company recommends the SkyRanger mostly for military and security purposes, from gathering covert intelligence to thwarting pirates at sea. The new UAV is now available to order, though you'll have to contact Aeryon Labs to receive a quote, and the company has stated it is giving priority to military and government customers.

Check out the video below to see how the Aeryon SkyRanger can go from a backpack to an airborne set of eyes in just a few seconds.

Source: Aeryon Labs
